
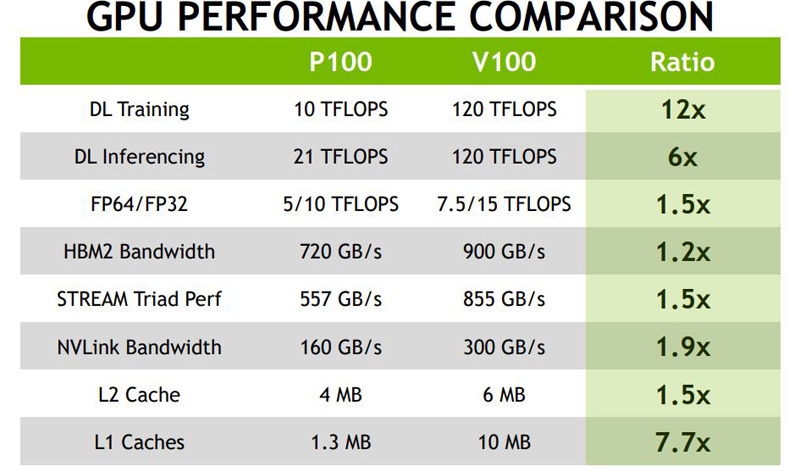

Nvidia presented the Volta GPU architecture and a series of hardware and software developments aimed at accelerating artificial intelligence workloads. According to Nvidia CEO Jensen Huang, Volta will become the standard for high-performance computing. By combining CUDA cores and Volta Tensor Cores in a unified architecture, a single server built on Tesla V100 GPUs will be able to replace hundreds of CPUs in high-performance computing.

Each Nvidia V100 GPU includes 21 billion transistors (delivering deep learning performance equivalent to 100 CPUs), 640 Tensor Cores, NVLink, and HBM2 DRAM at 900 GB/s, a technology that provides a 50% performance gain over the previous GPU generation. Volta's peak performance is five times that of Pascal, Nvidia's current GPU architecture, and 15 times that of Maxwell.

Volta GV100 Streaming Multiprocessor (2017)

V100 GPUs ship with software optimized for Volta, including CUDA 9.0 and a deep learning SDK comprising TensorRT 3, DeepStream SDK, and cuDNN 7, as well as all major AI frameworks. According to Nvidia, hundreds of thousands of GPU-accelerated applications are available for a range of demanding tasks, including neural network training and inference, high-performance computing, graphics, and complex data analysis.

Table: Tesla V100 performance compared with previous-generation Tesla accelerators.

2017: Partner solutions based on Nvidia Volta for AI

In September, Nvidia and its partners Dell EMC, Hewlett Packard Enterprise, IBM, and Supermicro presented more than ten servers based on Tesla V100 GPU accelerators with the Nvidia Volta architecture. According to Nvidia, V100 GPUs, whose deep learning performance exceeds 120 TFLOPS, are built specifically for neural network training and inference, high-performance computing, accelerated analytics, and other compute-intensive tasks. Vendors' multiprocessor systems based on V100 will give users broad access to Nvidia GPU capabilities for accelerating AI research and for building products and services in this area. A single Volta GPU delivers performance equivalent to 100 CPUs, allowing scientists, researchers, and engineers to tackle problems that previously seemed too difficult or impossible to solve.

The following V100-based systems were announced:

- Dell EMC: PowerEdge R740 with support for up to three V100 GPUs for PCIe, PowerEdge R740XD with support for up to three V100 GPUs for PCIe, and PowerEdge C4130 with support for up to four V100 GPUs for PCIe or four V100 GPUs for Nvidia NVLink in the SXM2 form factor.
- HPE: HPE Apollo 6500 with support for up to eight V100 GPUs for PCIe, and HPE ProLiant DL380 with support for up to three V100 GPUs for PCIe.
- IBM: next-generation IBM Power Systems servers based on the Power9 processor, with support for multiple V100 GPUs and NVLink technology, offering ultra-fast GPU-to-GPU and OpenPOWER CPU-to-GPU interconnects for fast data transfer.
- Supermicro: the product line supporting the new Volta GPUs includes the 7048GR-TR workstation for high-performance GPU computing; the 4028GR-TXRT, 4028GR-TRT, and 4028GR-TR2 servers for the most demanding deep learning applications; and 1028GQ-TRT servers for workloads such as complex analytics.

In addition, the range of partner systems was extended by Chinese manufacturers, including Inspur, Lenovo, and Huawei, which announced Volta-based systems for the data centers of internet companies.

Versatile Compute Acceleration for Mainstream Enterprise Servers

Bring accelerated performance to every enterprise workload with NVIDIA A30 Tensor Core GPUs. With NVIDIA Ampere architecture Tensor Cores and Multi-Instance GPU (MIG), the A30 delivers speedups securely across diverse workloads, including AI inference at scale and high-performance computing (HPC) applications. By combining fast memory bandwidth and low power consumption in a PCIe form factor optimized for mainstream servers, A30 enables an elastic data center and delivers maximum value for enterprises.

The NVIDIA A30 Tensor Core GPU delivers a versatile platform for mainstream enterprise workloads, like AI inference, training, and HPC. Multi-Instance GPU (MIG) ensures quality of service (QoS) with secure, hardware-partitioned, right-sized GPUs across all of these workloads for diverse users, optimally utilizing GPU compute resources. With TF32 and FP64 Tensor Core support, as well as an end-to-end software and hardware solution stack, A30 ensures that mainstream AI training and HPC applications can be rapidly addressed.
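To make the TF32 and FP64 formats mentioned above more concrete, the following sketch compares how much precision each one keeps. It is an illustration only, not NVIDIA code: TF32 is modelled here by truncating a single-precision value to 10 mantissa bits, which is an assumption about rounding (real hardware may round differently), and the helper names `to_float32`, `to_tf32`, and `relative_error` are hypothetical.

```python
import struct


def to_float32(x: float) -> float:
    """Round a Python float (FP64) to the nearest single-precision (FP32) value."""
    return struct.unpack("<f", struct.pack("<f", x))[0]


def to_tf32(x: float) -> float:
    """Approximate TF32: FP32's sign and 8-bit exponent, but only 10 mantissa bits.

    Assumption: the 13 low mantissa bits are simply truncated; real hardware
    may round instead, so treat the result as illustrative.
    """
    bits = struct.unpack("<I", struct.pack("<f", x))[0]
    bits &= 0xFFFFE000  # keep sign, exponent, and the top 10 of 23 mantissa bits
    return struct.unpack("<f", struct.pack("<I", bits))[0]


def relative_error(exact: float, approx: float) -> float:
    """Relative error of approx with respect to a nonzero exact value."""
    return abs(approx - exact) / abs(exact)


if __name__ == "__main__":
    x = 1.0 / 3.0  # Python stores this as FP64, used here as the reference
    print("FP32 relative error:", relative_error(x, to_float32(x)))
    print("TF32 relative error:", relative_error(x, to_tf32(x)))
```

Run as a script, this prints the relative error of 1/3 in each reduced format. The TF32 error comes out larger than the FP32 error because fewer mantissa bits survive, which is the trade-off behind using TF32 for AI training while keeping FP64 for HPC work that needs full precision.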
